Building More Powerful Agent Systems with Milvus and Llama-agents

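Agent systems are becoming increasingly popular because they help developers build intelligent, autonomous systems. Large language models (LLMs) can follow a wide range of instructions, which makes them well suited to managing agents; in many scenarios they help minimize manual intervention and handle more complex tasks. For example, an agent system can answer customer inquiries and even cross-sell based on customer preferences.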

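This article explores how to build an agent system with Llama-agents and Milvus. By combining the capabilities of LLMs with Milvus's vector similarity search, we can create complex agent systems that are intelligent, efficient, and scalable.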

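It also shows how to use different LLMs for different operations. For simpler tasks, we use the smaller and cheaper Mistral Nemo model. For more complex tasks, such as coordinating different agents, we use Mistral Large.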

01.

Introduction to Llama-agents, Ollama, Mistral Nemo, and Milvus Lite

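- Llama-agents: an extension of LlamaIndex, typically used together with LLMs to build robust, stateful, multi-actor applications.

- Ollama and Mistral Nemo: Ollama is an AI tool that lets users run large language models (such as Mistral Nemo) on a local machine, without a constant internet connection or reliance on external servers.

- Milvus Lite: a lightweight version of Milvus that you can run locally on a laptop, in a Jupyter Notebook, or on Google Colab. It helps you store and retrieve unstructured data efficiently.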

How Llama-agents works

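Llama-agents, released by LlamaIndex, is an asynchronous framework for building and iterating on multi-agent systems in production. Its features include multi-agent communication, distributed tool execution, and human-in-the-loop collaboration.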

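In Llama-agents, each agent is treated as a service that continuously processes incoming tasks. Each agent pulls messages from, and publishes messages to, a message queue.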
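The service-plus-queue idea can be sketched with nothing but the standard library. This is an illustration of the pattern, not the Llama-agents API: the names `agent_service` and `run_demo`, the `None` shutdown sentinel, and the two in-memory queues are all assumptions made for the example.

```python
import asyncio


async def agent_service(name, inbox, outbox):
    """Hypothetical agent service: pull tasks from the inbox, publish results to the outbox."""
    while True:
        task = await inbox.get()
        if task is None:  # sentinel value used here to shut the service down
            inbox.task_done()
            break
        await outbox.put((name, f"{name} handled: {task}"))
        inbox.task_done()


async def run_demo(tasks):
    inbox, outbox = asyncio.Queue(), asyncio.Queue()
    # Two services share one queue, so whichever is free picks up the next task.
    workers = [
        asyncio.create_task(agent_service(f"agent-{i}", inbox, outbox))
        for i in range(2)
    ]
    for task in tasks:
        await inbox.put(task)
    for _ in workers:
        await inbox.put(None)  # one shutdown sentinel per service
    await asyncio.gather(*workers)

    results = []
    while not outbox.empty():
        results.append(outbox.get_nowait())
    return results


results = asyncio.run(run_demo(["summarize Lyft 10-K", "summarize Uber 10-K"]))
for agent_name, message in results:
    print(message)
```

In a notebook that already has a running event loop, you would typically call `await run_demo(...)` instead of `asyncio.run(...)`.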

02.

Install dependencies

First, install the required dependencies.

! pip install llama-agents pymilvus python-dotenv
! pip install llama-index-vector-stores-milvus llama-index-readers-file llama-index-embeddings-huggingface llama-index-llms-ollama llama-index-llms-mistralai

# This is needed when running the code in a Notebook
import nest_asyncio
nest_asyncio.apply()

from dotenv import load_dotenv
import os

load_dotenv()

03.

Load data into Milvus

Download the sample data from the LlamaIndex repository. It contains PDF files about Uber and Lyft.

!mkdir -p 'data/10k/'
!wget 'https://raw.githubusercontent.com/run-llama/llama_index/main/docs/docs/examples/data/10k/uber_2021.pdf' -O 'data/10k/uber_2021.pdf'
!wget 'https://raw.githubusercontent.com/run-llama/llama_index/main/docs/docs/examples/data/10k/lyft_2021.pdf' -O 'data/10k/lyft_2021.pdf'

Now we can extract the content and use an embedding model to convert it into embedding vectors, which are then stored in the Milvus vector database. This article uses the bge-small-en-v1.5 text embedding model, which is small and light on resources.

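Next, create a collection in Milvus to store and retrieve the data. This article uses Milvus Lite, the lightweight version of Milvus. Milvus is a high-performance vector database that provides vector similarity search and is well suited to building AI applications. Milvus Lite can be installed quickly with a simple pip install pymilvus command.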
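To make "vector similarity search" concrete, here is a minimal brute-force sketch in plain Python. It is not Milvus code: a real vector database uses dedicated indexes instead of scanning every vector, and the three-dimensional toy vectors and document ids below are invented for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, vectors, k=3):
    """Return the ids of the k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query, vec), doc_id) for doc_id, vec in vectors.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Toy embeddings (assumed values); real ones from bge-small-en-v1.5 have 384 dimensions.
toy_vectors = {
    "lyft_revenue": [0.9, 0.1, 0.0],
    "uber_revenue": [0.8, 0.3, 0.1],
    "uber_risks": [0.1, 0.2, 0.9],
}

nearest = top_k([1.0, 0.0, 0.0], toy_vectors, k=2)
print(nearest)
```

The query engine built later in this section asks for `similarity_top_k=3`, which plays the same role as `k` here.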

The PDF files are converted into vectors, which we then store in Milvus.

from llama_index.vector_stores.milvus import MilvusVectorStore
from llama_index.core import Settings
from llama_index.embeddings.huggingface import HuggingFaceEmbedding
from llama_index.core import SimpleDirectoryReader, VectorStoreIndex, StorageContext, load_index_from_storage
from llama_index.core.tools import QueryEngineTool, ToolMetadata

# Define the default Embedding model used in this Notebook.
# bge-small-en-v1.5 is a small Embedding model, it's perfect to use locally
Settings.embed_model = HuggingFaceEmbedding(
    model_name="BAAI/bge-small-en-v1.5"
)

input_files = ["./data/10k/lyft_2021.pdf", "./data/10k/uber_2021.pdf"]

# Create a single Milvus vector store
# dim=384 matches the embedding size of bge-small-en-v1.5
vector_store = MilvusVectorStore(
    uri="./milvus_demo_metadata.db",
    collection_name="companies_docs",
    dim=384,
    overwrite=False,
)

# Create a storage context with the Milvus vector store
storage_context = StorageContext.from_defaults(vector_store=vector_store)

# Load data
docs = SimpleDirectoryReader(input_files=input_files).load_data()

# Build index
index = VectorStoreIndex.from_documents(docs, storage_context=storage_context)

# Define the query engine
company_engine = index.as_query_engine(similarity_top_k=3)

04.

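Define tools

We need to define two tools for our data: the first provides information about Lyft, and the second provides information about Uber. Later in the article, we explore how to define a single, broader tool.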
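Conceptually, a tool pairs a name and a description (which the LLM reads when deciding what to call) with something callable. The sketch below shows that idea with a hypothetical `Tool` dataclass and `call_tool` helper; these are stand-ins for illustration, not the LlamaIndex `QueryEngineTool` API, and the lambda bodies are placeholders for real query engines.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Tool:
    """Hypothetical tool record: what the LLM sees (name, description) plus what runs."""
    name: str
    description: str
    run: Callable[[str], str]


def call_tool(tools: Dict[str, Tool], name: str, tool_input: str) -> str:
    """Dispatch by tool name; an unknown name yields an error message instead of raising."""
    tool = tools.get(name)
    if tool is None:
        return f"Error: No such tool named `{name}`."
    return tool.run(tool_input)


tools = {
    tool.name: tool
    for tool in [
        Tool(
            "lyft_10k",
            "Provides information about Lyft financials for year 2021.",
            lambda question: f"[lyft docs] {question}",  # placeholder for a query engine
        ),
        Tool(
            "uber_10k",
            "Provides information about Uber financials for year 2021.",
            lambda question: f"[uber docs] {question}",  # placeholder for a query engine
        ),
    ]
}

print(call_tool(tools, "uber_10k", "What was Uber's total revenue in 2021?"))
print(call_tool(tools, "Compare", "Uber vs Lyft"))
```

Returning an error string for an unknown tool, rather than raising, lets the agent read the message and try a different approach on its next step.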

# Define the different tools that can be used by our Agent.
# Both tools wrap the same query engine; only the name and description differ.
query_engine_tools = [
    QueryEngineTool(
        query_engine=company_engine,
        metadata=ToolMetadata(
            name="lyft_10k",
            description=(
                "Provides information about Lyft financials for year 2021. "
                "Use a detailed plain text question as input to the tool."
            ),
        ),
    ),
    QueryEngineTool(
        query_engine=company_engine,
        metadata=ToolMetadata(
            name="uber_10k",
            description=(
                "Provides information about Uber financials for year 2021. "
                "Use a detailed plain text question as input to the tool."
            ),
        ),
    ),
]

05.

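Set up the agent with Mistral Nemo

We use Mistral Nemo with Ollama to limit resource usage and reduce application cost; together they let us run the model locally. Mistral Nemo is a small LLM with a large context window of up to 128k tokens, which is useful when processing large documents. It has also been fine-tuned to follow precise instructions, reason, handle multi-turn conversations, and generate code.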

from llama_index.llms.ollama import Ollama
from llama_index.core.agent import AgentRunner, ReActAgentWorker, ReActAgent

# Set up the agent
llm = Ollama(model="mistral-nemo", temperature=0.4)
agent = ReActAgent.from_tools(query_engine_tools, llm=llm, verbose=True)

# Example usage
response = agent.chat("Compare the revenue of Lyft and Uber in 2021.")
print(response)

The generated response looks like this:

> Running step 7ed275f6-b0de-4fd7-b2f2-fd551e58bfe2. Step input: Compare the revenue of Lyft and Uber in 2021.
Thought: The current language of the user is: English. I need to use tools to help me answer the question.
Action: lyft_10k
Action Input: {'input': "What was Lyft's total revenue in 2021?"}
huggingface/tokenizers: The current process just got forked, after parallelism has already been used. Disabling parallelism to avoid deadlocks...
To disable this warning, you can either:
- Avoid using `tokenizers` before the fork if possible
- Explicitly set the environment variable TOKENIZERS_PARALLELISM=(true | false)
Observation: The total revenue for Lyft in 2021 was generated primarily from its ridesharing marketplace connecting drivers and riders, with revenue recognized from fees paid by drivers for using the Lyft Platform offerings in accordance with ASC 606.
> Running step 33064fd3-3c3a-42c4-ab5a-e7ebf8a9325b. Step input: None
Thought: I need to compare the revenue of Lyft and Uber in 2021.
Action: uber_10k
Action Input: {'input': "What was Uber's total revenue in 2021?"}
Observation: $17,455
> Running step 7eacfef4-d9da-4cbf-ac07-18f2ff6a3951. Step input: None
Thought: I have obtained Uber's total revenue for 2021. Now, I need to compare it with Lyft's.
Action: lyft_10k
Action Input: {'input': "What was Lyft's total revenue in 2021?"}
Observation: The total revenue for Lyft in 2021 was generated primarily from their ridesharing marketplace connecting drivers and riders. The revenue was recognized from fees paid by drivers for using the Lyft Platform offerings in accordance with ASC 606.
> Running step 88673e15-b74c-4755-8b9c-2b7ef3acea48. Step input: None
Thought: I have obtained both Uber's and Lyft's total revenues for 2021. Now, I need to compare them.
Action: Compare
Action Input: {'Uber': '$17,455', 'Lyft': '$3.6 billion'}
Observation: Error: No such tool named `Compare`.
> Running step bed5941f-74ba-41fb-8905-88525e67b785. Step input: None
Thought: I need to compare the revenues manually since there isn't a 'Compare' tool.
Answer: In 2021, Uber's total revenue was $17.5 billion, while Lyft's total revenue was $3.6 billion. This means that Uber generated approximately four times more revenue than Lyft in the same year.

Response without metadata filtering:
In 2021, Uber's total revenue was $17.5 billion, while Lyft's total revenue was $3.6 billion. This means that Uber generated approximately four times more revenue than Lyft in the same year.

06.

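Metadata filtering with Milvus

Defining one tool per company's documents is convenient, but it does not scale well when there are many documents to handle. A better solution is to use the metadata filtering that Milvus provides. We can store data from different companies in the same collection but retrieve only the data relevant to a specific company, saving time and resources.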
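The idea of filtering on metadata before ranking can be sketched in a few lines of plain Python. This is a conceptual illustration under assumed data, not how Milvus implements it: the `records` list, its two-dimensional vectors, and the `search` helper are all hypothetical.

```python
# Toy collection: every chunk keeps its source file as metadata (assumed values).
records = [
    {"text": "Lyft revenue discussion", "file_name": "lyft_2021.pdf", "vector": [0.9, 0.1]},
    {"text": "Uber revenue discussion", "file_name": "uber_2021.pdf", "vector": [1.0, 0.0]},
    {"text": "Lyft risk factors", "file_name": "lyft_2021.pdf", "vector": [0.1, 0.9]},
]


def search(query_vector, records, file_name=None, k=2):
    """Rank records by dot product, optionally restricted to one source file."""
    # Apply the exact-match filter first so other companies' chunks are never scored.
    candidates = [r for r in records if file_name is None or r["file_name"] == file_name]
    candidates.sort(
        key=lambda r: sum(q * v for q, v in zip(query_vector, r["vector"])),
        reverse=True,
    )
    return [r["text"] for r in candidates[:k]]


unfiltered = search([1.0, 0.0], records)
lyft_only = search([1.0, 0.0], records, file_name="lyft_2021.pdf")
print(unfiltered)
print(lyft_only)
```

Without the filter, the Uber chunk ranks first for this query; with the filter, it cannot appear at all, which is exactly the behavior we rely on when a single collection holds several companies.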

The following code shows how to use metadata filtering:

from llama_index.core.vector_stores import ExactMatchFilter, MetadataFilters

# Example usage with metadata filtering
filters = MetadataFilters(
    filters=[ExactMatchFilter(key="file_name", value="lyft_2021.pdf")]
)
filtered_query_engine = index.as_query_engine(filters=filters)

# Define query engine tools with the filtered query engine
query_engine_tools = [
    QueryEngineTool(
        query_engine=filtered_query_engine,
        metadata=ToolMetadata(
            name="company_docs",
            description=(
                "Provides information about various companies' financials for year 2021. "
                "Use a detailed plain text question as input to the tool."
            ),
        ),
    ),
]

# Set up the agent with the updated query engine tools
agent = ReActAgent.from_tools(query_engine_tools, llm=llm, verbose=True)

Our retriever now filters the data and considers only the chunks that belong to the lyft_2021.pdf document. As a result, we should not be able to find any information about Uber.

try:
    response = agent.chat("What is the revenue of uber in 2021?")
    print("Response with metadata filtering:")
    print(response)
except ValueError as err:
    print("we couldn't find the data, reached max iterations")

Let's test it. When we ask about Uber's 2021 revenue, the agent retrieves nothing.

Thought: The user wants to know Uber's revenue for 2021.
Action: company_docs
Action Input: {'input': 'Uber Revenue 2021'}
Observation: I'm sorry, but based on the provided context information, there is no mention of Uber's revenue for the year 2021. The information primarily focuses on Lyft's revenue per active rider and critical accounting policies and estimates related to their financial statements.
> Running step c0014d6a-e6e9-46b6-af61-5a77ca857712. Step input: None

However, when we ask about Lyft's 2021 revenue, the agent is able to retrieve an answer from the Lyft document.

try:
    response = agent.chat("What is the revenue of Lyft in 2021?")
    print("Response with metadata filtering:")
    print(response)
except ValueError as err:
    print("we couldn't find the data, reached max iterations")

The result is as follows:

> Running step 7f1eebe3-2ebd-47ff-b560-09d09cdd99bd. Step input: What is the revenue of Lyft in 2021?
Thought: The current language of the user is: English. I need to use a tool to help me answer the question.
Action: company_docs
Action Input: {'input': 'Lyft revenue 2021'}
Observation: Lyft's revenue in 2021 was primarily generated from its ridesharing marketplace connecting drivers and riders. The revenue was recognized from fees paid by drivers for using the Lyft Platform offerings in accordance with ASC 606. Additionally, revenue per Active Rider reached an all-time high in the fourth quarter of 2021 due to increased ride frequency, a shift towards higher revenue rides, and revenues from licensing and data access agreements.
> Running step 072a3253-7eee-44e3-a787-397c9cbe80d8. Step input: None
Thought: The current language of the user is English. I need to use a tool to help me answer the question.
Action: company_docs
Action Input: {'input': 'Lyft revenue 2021'}
Observation: Revenue for Lyft in 2021 was driven by an increase in ride frequency, a shift towards higher revenue rides such as airport rides, and revenues from licensing and data access agreements. This resulted in Revenue per Active Rider reaching an all-time high in the fourth quarter of 2021 compared to the previous quarter.
> Running step 6494fe6d-27ad-484f-9204-0c4683bfa1c2. Step input: None
Thought: The user is asking for Lyft's revenue in 2021.
Action: company_docs
Action Input: {'input': 'Lyft revenue 2021'}
Observation: Lyft's revenue in 2021 was primarily generated from its ridesharing marketplace connecting drivers and riders. Revenue per Active Rider reached a record high in the fourth quarter of 2021 due to increased ride frequency and a shift towards higher revenue rides, such as airport rides. Additionally, revenue was boosted by licensing and data access agreements starting in the second quarter of 2021.
> Running step 0076b6dd-e7d0-45ac-a39a-4afa5f1aaf47. Step input: None
Answer: Observation: Lyft's total revenue in 2021 was $3.4 billion.

Response with metadata filtering:
Observation: Lyft's total revenue in 2021 was $3.4 billion.

07.

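Use an LLM to create metadata filters automatically

Now let's use an LLM to create metadata filters automatically from the user's question, making the agent more efficient.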

from llama_index.core.prompts.base import PromptTemplate

# Function to create a filtered query engine
def create_query_engine(question):
    # Extract metadata filters from question using a language model
    prompt_template = PromptTemplate(
        "Given the following question, extract relevant metadata filters.\n"
        "Consider company names, years, and any other relevant attributes.\n"
        "Don't write any other text, just the MetadataFilters object.\n"
        "Format it by creating a MetadataFilters like shown in the following\n"
        "MetadataFilters(filters=[ExactMatchFilter(key='file_name', value='lyft_2021.pdf')])\n"
        "If no specific filters are mentioned, returns an empty MetadataFilters()\n"
        "Question: {question}\n"
        "Metadata Filters:\n"
    )
    prompt = prompt_template.format(question=question)
    llm = Ollama(model="mistral-nemo")
    response = llm.complete(prompt)

    metadata_filters_str = response.text.strip()
    if metadata_filters_str:
        # eval() runs the model's output as Python code, so use it only in a trusted demo.
        # It relies on MetadataFilters and ExactMatchFilter having been imported earlier.
        metadata_filters = eval(metadata_filters_str)
        return index.as_query_engine(filters=metadata_filters)
    return index.as_query_engine()

We can integrate this function into the agent.

# Example usage with metadata filtering
question = "What is Uber revenue? This should be in the file_name: uber_2021.pdf"
filtered_query_engine = create_query_engine(question)

# Define query engine tools with the filtered query engine
query_engine_tools = [
    QueryEngineTool(
        query_engine=filtered_query_engine,
        metadata=ToolMetadata(
            name="company_docs_filtering",
            description=(
                "Provides information about various companies' financials for year 2021. "
                "Use a detailed plain text question as input to the tool."
            ),
        ),
    ),
]

# Set up the agent with the updated query engine tools
agent = ReActAgent.from_tools(query_engine_tools, llm=llm, verbose=True)
response = agent.chat(question)
print("Response with metadata filtering:")
print(response)

The agent now creates a MetadataFilters object from the key file_name and the value uber_2021.pdf. The more elaborate the prompt, the more advanced the filters the agent can create.
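Because create_query_engine passes the model's text straight to eval, any Python the model emits would be executed. One possible alternative, sketched here with the standard-library `ast` module, is to parse the text and accept only the exact shape the prompt asks for. The helper name `parse_exact_match_filters` and its accept-only-`ExactMatchFilter` policy are assumptions for this example, not part of LlamaIndex.

```python
import ast


def parse_exact_match_filters(text):
    """Return [(key, value), ...] from a MetadataFilters(...) string without executing it."""
    tree = ast.parse(text.strip(), mode="eval")
    call = tree.body
    if not (
        isinstance(call, ast.Call)
        and isinstance(call.func, ast.Name)
        and call.func.id == "MetadataFilters"
        and not call.args
    ):
        raise ValueError("expected a MetadataFilters(...) expression")

    pairs = []
    for keyword in call.keywords:
        if keyword.arg != "filters" or not isinstance(keyword.value, ast.List):
            raise ValueError("only a 'filters=[...]' argument is supported")
        for item in keyword.value.elts:
            if not (
                isinstance(item, ast.Call)
                and isinstance(item.func, ast.Name)
                and item.func.id == "ExactMatchFilter"
                and not item.args
            ):
                raise ValueError("only ExactMatchFilter(key=..., value=...) is supported")
            # literal_eval accepts plain literals only, so nested calls are rejected.
            fields = {kw.arg: ast.literal_eval(kw.value) for kw in item.keywords}
            if set(fields) != {"key", "value"}:
                raise ValueError("ExactMatchFilter needs exactly 'key' and 'value'")
            pairs.append((fields["key"], fields["value"]))
    return pairs


llm_output = "MetadataFilters(filters=[ExactMatchFilter(key='file_name', value='uber_2021.pdf')])"
print(parse_exact_match_filters(llm_output))
```

The returned pairs could then be used to build `ExactMatchFilter` objects explicitly, as in the earlier hand-written filter. Text that is not valid Python raises `SyntaxError`, and anything outside the expected shape raises `ValueError`, so the caller can fall back to an unfiltered query engine.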

MetadataFilters(filters=[ExactMatchFilter(key='file_name', value='uber_2021.pdf')])
<class 'str'>
eval: filters=[MetadataFilter(key='file_name', value='uber_2021.pdf', operator=<FilterOperator.EQ: '=='>)] condition=<FilterCondition.AND: 'and'>
> Running step a2cfc7a2-95b1-4141-bc52-36d9817ee86d. Step input: What is Uber revenue? This should be in the file_name: uber_2021.pdf
Thought: The current language of the user is English. I need to use a tool to help me answer the question.
Action: company_docs
Action Input: {'input': 'Uber revenue 2021'}
Observation: $17,455 million

08.

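Use Mistral Large as the orchestrator

Mistral Large is a more powerful model than Mistral Nemo, but it also consumes more resources. By using it only as the orchestrator, we save some resources while still benefiting from intelligent agents.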

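Why use Mistral Large?

Mistral Large is Mistral AI's flagship model. It has top-tier reasoning capabilities and supports complex multilingual reasoning tasks, including text understanding, transformation, and code generation, which makes it a good fit for complex tasks that need large-scale reasoning or are highly specialized. Its advanced function calling is exactly what we need to coordinate different agents.

We don't need a heavyweight model for every task, which would burden the system. Instead, we can use Mistral Large to direct other agents to perform specific tasks. This approach optimizes performance, lowers operating costs, and improves the system's scalability and efficiency.

Mistral Large acts as the central orchestrator, coordinating the activities of multiple agents managed by Llama-agents: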

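- Task Delegation: when a complex query arrives, Mistral Large determines the most suitable agents and tools to handle each part of it.

- Agent Coordination: Llama-agents manages the execution of these tasks, making sure each agent receives the inputs it needs and that its output is processed and integrated correctly.

- Result Synthesis: Mistral Large then compiles the outputs from the individual agents into a coherent, comprehensive response, so that the final output is greater than the sum of its parts.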
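The three roles above can be sketched as a small, self-contained loop. This is only an illustration of the control flow: the `route` function uses keyword matching as a stand-in for the decision an LLM orchestrator would make, and the agent names and canned answers are invented for the example.

```python
def route(query, agents):
    """Task delegation: pick every agent whose name appears in the query.

    Keyword matching is a placeholder for the orchestrator LLM's decision.
    """
    lowered = query.lower()
    return [name for name in agents if name in lowered]


def orchestrate(query, agents):
    selected = route(query, agents)
    # Agent coordination: give each selected agent the query and collect its output.
    outputs = {name: agents[name](query) for name in selected}
    if not outputs:
        return "No agent could handle the query."
    # Result synthesis: merge the partial answers into a single response.
    return " ".join(f"{name.title()}: {answer}" for name, answer in outputs.items())


# Hypothetical worker agents returning canned answers.
agents = {
    "lyft": lambda query: "answer from the Lyft agent.",
    "uber": lambda query: "answer from the Uber agent.",
}

summary = orchestrate("Compare Lyft and Uber revenue", agents)
print(summary)
```

Keeping routing, execution, and synthesis as separate steps is what allows a large model to handle only the first and last steps while smaller models do the work in between.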

Llama Agents

Use Mistral Large as the orchestrator, and use an agent to generate the answer.

from llama_agents import (
    AgentService,
    ToolService,
    LocalLauncher,
    MetaServiceTool,
    ControlPlaneServer,
    SimpleMessageQueue,
    AgentOrchestrator,
)
from llama_index.core.agent import FunctionCallingAgentWorker
from llama_index.llms.mistralai import MistralAI

# create our multi-agent framework components
message_queue = SimpleMessageQueue()
control_plane = ControlPlaneServer(
    message_queue=message_queue,
    orchestrator=AgentOrchestrator(llm=MistralAI('mistral-large-latest')),
)

# define Tool Service
tool_service = ToolService(
    message_queue=message_queue,
    tools=query_engine_tools,
    running=True,
    step_interval=0.5,
)

# define meta-tools here
# (top-level await works in a Notebook; in a plain script, run it inside an async function)
meta_tools = [
    await MetaServiceTool.from_tool_service(
        t.metadata.name,
        message_queue=message_queue,
        tool_service=tool_service,
    )
    for t in query_engine_tools
]

# define Agent and agent service
worker1 = FunctionCallingAgentWorker.from_tools(
    meta_tools,
    llm=MistralAI('mistral-large-latest')
)
agent1 = worker1.as_agent()
agent_server_1 = AgentService(
    agent=agent1,
    message_queue=message_queue,
    description="Used to answer questions over different companies for their Financial results",
    service_name="Companies_analyst_agent",
)

import logging

# change logging level to enable or disable more verbose logging
logging.getLogger("llama_agents").setLevel(logging.INFO)

## Define Launcher
launcher = LocalLauncher(
    [agent_server_1, tool_service],
    control_plane,
    message_queue,
)

query_str = "What are the risk factors for Uber?"
print(launcher.launch_single(query_str))

> Some key risk factors for Uber include fluctuations in the number of drivers and merchants due to dissatisfaction with the brand, pricing models, and safety incidents. Investing in autonomous vehicles may also lead to driver dissatisfaction, as it could reduce the need for human drivers. Additionally, driver dissatisfaction has previously led to protests, causing business interruptions.

09.

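Summary

This article showed how to create and use agents with the Llama-agents framework, powered by two different large language models: Mistral Nemo and Mistral Large. We demonstrated how to take advantage of the strengths of different LLMs and coordinate resources effectively to build an intelligent, efficient system.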

如何学习大模型

现在社会上大模型越来越普及了,已经有很多人都想往这里面扎,但是却找不到适合的方法去学习。

作为一名资深码农,初入大模型时也吃了很多亏,踩了无数坑。现在我想把我的经验和知识分享给你们,帮助你们学习AI大模型,能够解决你们学习中的困难。

我已将重要的AI大模型资料包括市面上AI大模型各大白皮书、AGI大模型系统学习路线、AI大模型视频教程、实战学习,等录播视频免费分享出来,需要的小伙伴可以扫取。

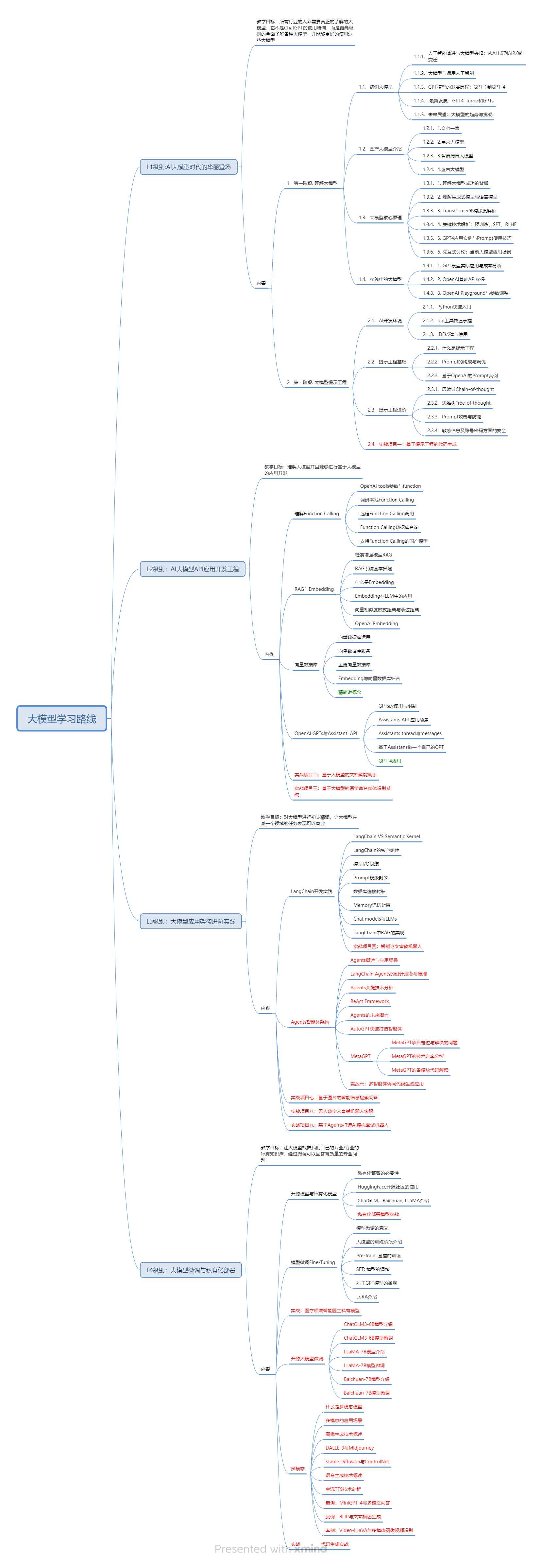

一、AGI大模型系统学习路线

很多人学习大模型的时候没有方向,东学一点西学一点,像只无头苍蝇乱撞,我下面分享的这个学习路线希望能够帮助到你们学习AI大模型。

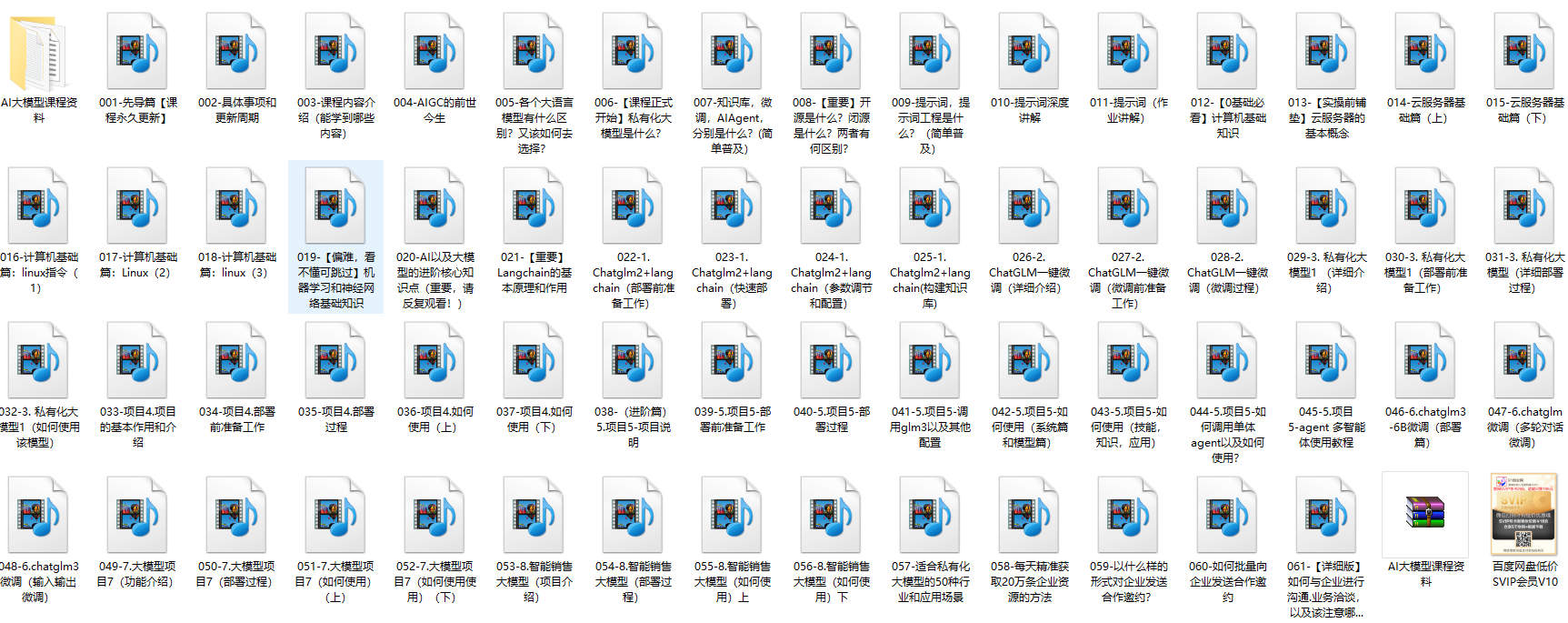

二、AI大模型视频教程

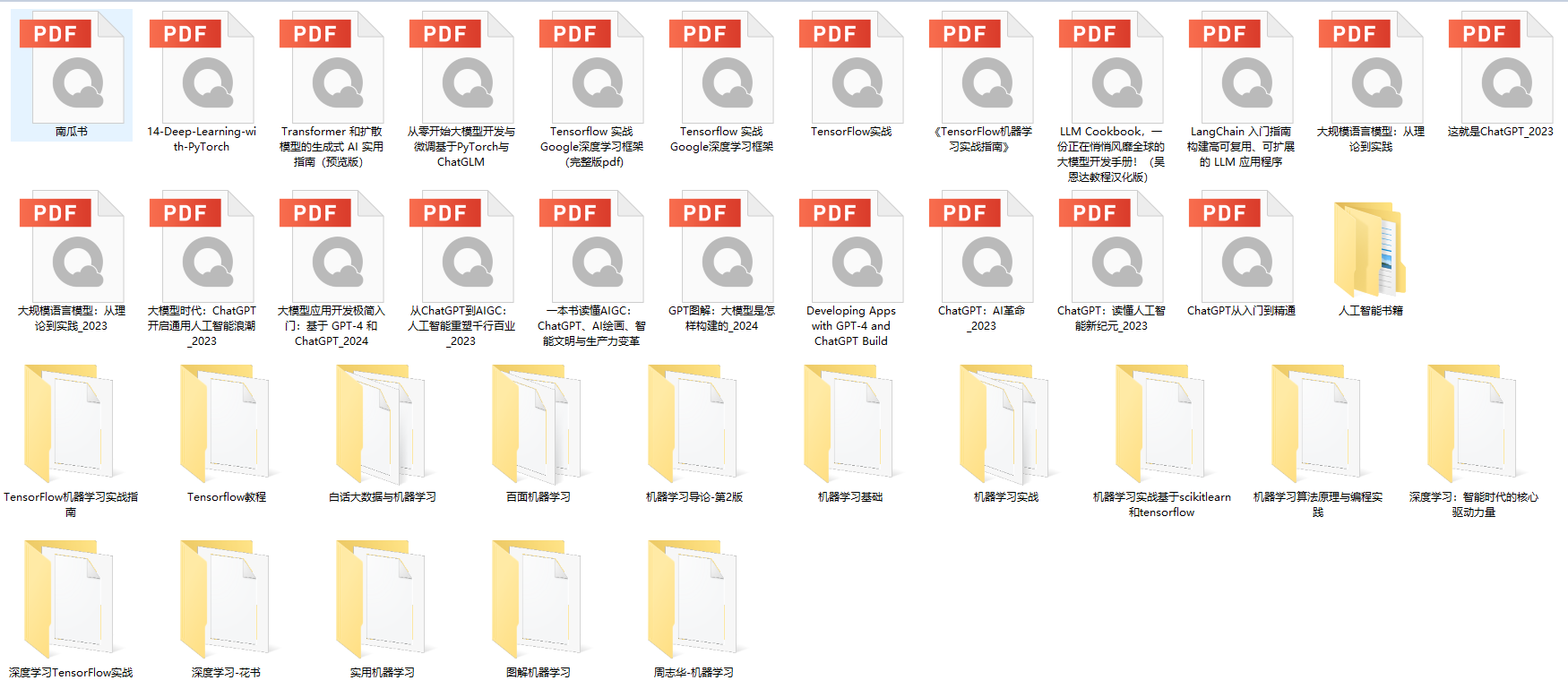

三、AI大模型各大学习书籍

四、AI大模型各大场景实战案例

五、结束语

学习AI大模型是当前科技发展的趋势,它不仅能够为我们提供更多的机会和挑战,还能够让我们更好地理解和应用人工智能技术。通过学习AI大模型,我们可以深入了解深度学习、神经网络等核心概念,并将其应用于自然语言处理、计算机视觉、语音识别等领域。同时,掌握AI大模型还能够为我们的职业发展增添竞争力,成为未来技术领域的领导者。

再者,学习AI大模型也能为我们自己创造更多的价值,提供更多的岗位以及副业创收,让自己的生活更上一层楼。

因此,学习AI大模型是一项有前景且值得投入的时间和精力的重要选择。